Motion Graphics with AI in 2026: How the Production Pipeline Has Changed

Running a motion graphics studio means I feel every shift in production tools immediately — in project timelines, in team conversations, in client expectations. The AI transition in motion design hasn't been gradual. In the past eighteen months it's been a step change, and the production pipeline my studio runs today looks fundamentally different from what we were doing in 2024.

The pre-production phase: dramatically faster, differently structured

Pre-production used to be where we spent the most time. Concept development, style frame creation, animatic production — each of these was a significant labor investment before a single final frame was produced. AI tools have compressed this phase substantially, but not by eliminating the work. By changing its character.

We now generate 20 to 30 style frame concepts in the time it previously took to produce three or four. That sounds like a pure efficiency gain, but it creates a new problem. Clients get more options, which means more decisions, which means longer approval cycles if you don't manage the process carefully. More options don't always mean faster decisions.

The animatic phase has changed the most. AI video generation lets us produce rough motion tests from style frames quickly enough that the animatic and first-pass production now overlap in ways they never did before. The boundary between pre-production and production has blurred.

Asset production: what changed and what didn't

Illustration and background asset production is where AI integration has created the most concrete time savings in our pipeline. Environments, textures, decorative elements, supporting graphics — these categories of assets used to require significant illustration hours. AI tools now handle a substantial portion of this work.

Character animation hasn't changed as much as the hype suggests. Consistent character design across a long-form piece still requires human illustration and rigging. AI tools produce interesting single-character shots but struggle with maintaining visual consistency across a sequence — same character, same proportions, same details across 40 scenes. That continuity work is still manual.

What's genuinely new in 2026 is AI-assisted compositing. Tools that can intelligently rotoscope, match lighting, and suggest blend modes are reducing post-production time in ways that weren't possible two years ago. This is where I see the most continued potential for pipeline efficiency.

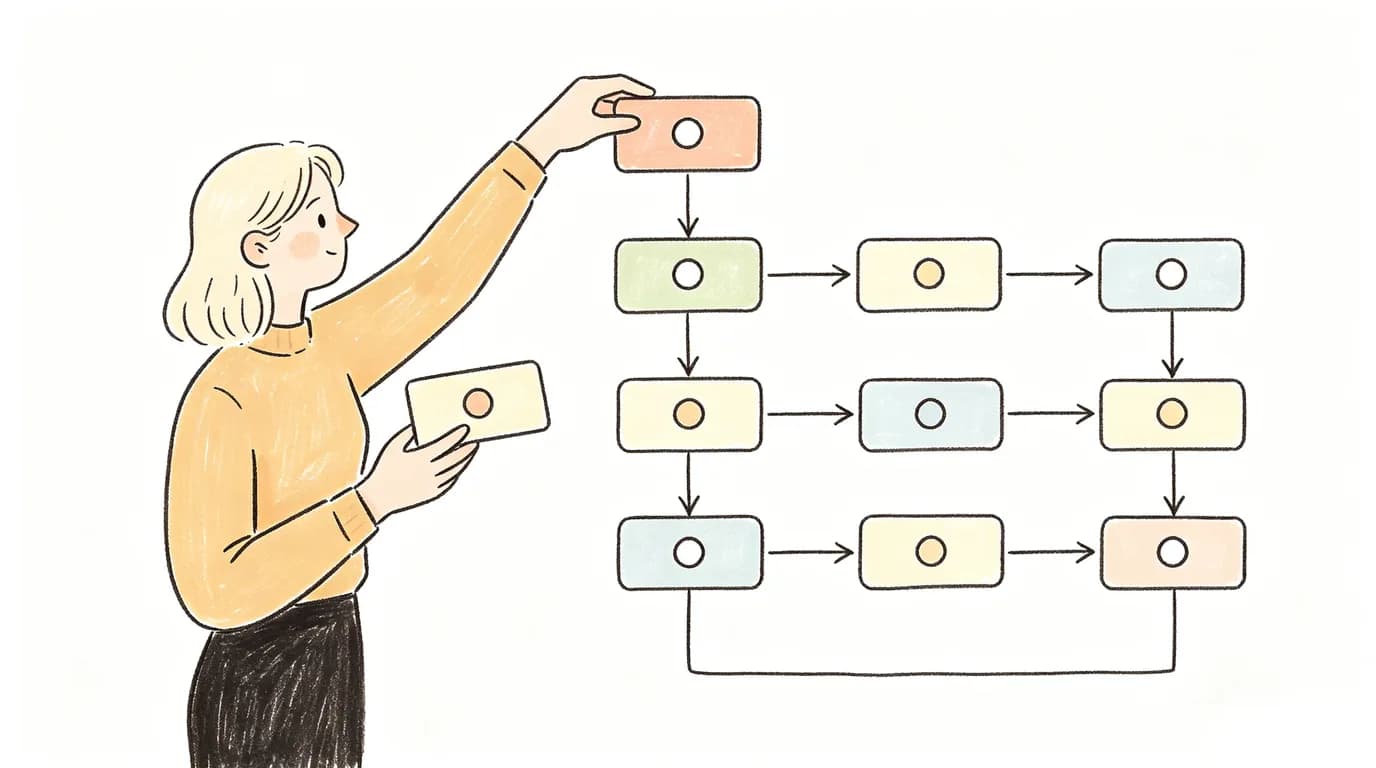

How team structure has adapted

We've restructured our team around two roles that didn't exist at our studio three years ago. The first is an AI prompt specialist who knows the generation tools well enough to produce production-ready outputs consistently. The second is a quality review role focused specifically on AI output: someone who can quickly spot temporal artifacts, consistency errors, and off-brand elements before they reach client review.

The traditional illustrator and animator roles still exist, but their focus has shifted. Illustrators spend more time on creative direction and quality control of AI outputs, less time on ground-up asset production. Animators focus on complex character work and timing precision — the areas where AI is still unreliable.

Client expectations have changed too

Clients in 2026 have seen AI video and they have opinions about it. Some actively request AI-generated aesthetic elements. Others specifically ask that character work be hand-illustrated because they want to differentiate from AI-generated content. Neither position is wrong — they're just different creative and brand decisions.

What's changed in our client conversations is the baseline of what's expected in a pitch. Clients now expect to see motion references, not just static style frames, at the proposal stage. Meeting that expectation two years ago would have been unreasonable. Today it's a standard part of the pitch process, made possible by fast AI video generation.

What I think the pipeline looks like in two more years

The direction is clearer than the timeline. The human role in motion design is moving from production execution toward creative direction and quality control. This isn't a reduction in creative value. It's a reallocation toward the highest-value parts of the craft, the parts that require taste, brand understanding, and storytelling judgment.

The studios that adapt well will be the ones that build AI tools into their workflows without losing the design thinking that makes motion graphics effective. The tools are changing fast. The principles of visual communication aren't.